Introduction to Cache Memory and Its Mapping Functions

Last Updated: Sep 19, 2013

In a computer system, the program to be executed is loaded into main memory. The processor then fetches code and data from main memory to execute the program. The DRAMs that form the main memory are slow devices, so wait states must be inserted in memory read/write cycles, which reduces the speed of execution. To speed up the process, high-speed memories such as SRAMs are used. A small section of SRAM added to the memory system alongside main memory is referred to as cache memory.

Program Locality:

Program locality is the tendency of a program to access memory addresses close to those it accessed recently, which makes the next memory address predictable from the current one.

This enables the cache controller to fetch a whole block of memory instead of just a single address.

- It is possible to build a computer that uses only static RAM.

- This would be very fast.

- No cache would be needed.

- It would be very expensive.

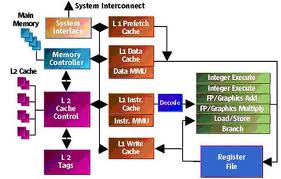

Cache:

- Small amount of fast memory

- It is placed between the normal main memory and CPU (central processing unit).

- May be located on CPU chip or module

Cache operation – overview:

- The CPU requests the contents of a memory location.

- Check cache for this data

- If present, get from cache (fast)

- If not present, read required block from main memory to cache

- Then deliver from cache to CPU

- The cache stores tags to identify which block of main memory is present in each cache slot.
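The read path above can be sketched in a few lines of Python. This is only a toy model under stated assumptions: the 4-byte block size, the 256-byte `main_memory`, and the names `cache` and `read_byte` are illustrative and do not come from any real hardware. The cache never fills up here, so no replacement policy is modelled.

```python
# Toy model of the cache read path (illustrative assumptions throughout).
BLOCK_SIZE = 4  # bytes per block; assumed value for this sketch

main_memory = bytes(range(256))  # toy 256-byte main memory
cache = {}  # maps block number (acting as the tag) -> block contents


def read_byte(address):
    """Return (value, hit) for a CPU read of one byte."""
    block_number = address // BLOCK_SIZE
    offset = address % BLOCK_SIZE
    hit = block_number in cache
    if not hit:
        # Miss: copy the whole block from main memory into the cache.
        start = block_number * BLOCK_SIZE
        cache[block_number] = main_memory[start:start + BLOCK_SIZE]
    # Either way, the byte is delivered to the CPU from the cache.
    return cache[block_number][offset], hit


first = read_byte(9)    # miss: loads the block holding addresses 8..11
second = read_byte(10)  # hit: address 10 is in the block just loaded
```

The second read hits because the first one brought in the whole block, which is how a cache takes advantage of program locality.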

Cache Design:

- Size

- Mapping Function

- Replacement Algorithm

- Write Policy

- Block Size

- Number of Caches

Size does matter:

- Cost

  More cache is expensive

- Speed

  More cache is faster (up to a point)

  Checking cache for data takes time

Mapping Function:

- Cache of 64 kBytes

- Cache block of 4 bytes

  i.e. the cache is 16k ($2^{14}$) lines of 4 bytes

- 16 MBytes of main memory

- 24-bit address ($2^{24}$ = 16M)
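The figures in this example configuration can be checked with a short calculation. The constant names below are chosen only for this sketch.

```python
# Check the example configuration: 64 kByte cache, 4-byte blocks, 16 MByte memory.
CACHE_BYTES = 64 * 1024
BLOCK_BYTES = 4
MEMORY_BYTES = 16 * 1024 * 1024

cache_lines = CACHE_BYTES // BLOCK_BYTES        # number of cache lines
memory_blocks = MEMORY_BYTES // BLOCK_BYTES     # number of main-memory blocks
address_bits = (MEMORY_BYTES - 1).bit_length()  # bits needed to address every byte

print(cache_lines, memory_blocks, address_bits)
```

With these values there are far more memory blocks than cache lines, which is why a mapping function is needed to decide where each block may be placed.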

I. Direct Mapping:

- Each block of main memory maps to only one cache line, i.e. if a block is in the cache, it must be in one specific place.

- The address is in two parts:

- The least significant w bits identify a unique word within a block.

- The most significant s bits specify one memory block.

- The MSBs (most significant bits) are split into a cache line field of r bits and a tag of s - r bits (the most significant).

- No two blocks that map to the same line have the same tag field.

- The contents of the cache are checked by finding the line and comparing the tag.
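As a rough sketch, the address split and tag check can be written as follows for the 24-bit address, 4-byte block, 16k-line example. The function names `split_direct` and `access` and the sample addresses are illustrative assumptions. Only tags are stored, not the data itself.

```python
# Direct-mapped lookup sketch for the example: 24-bit address, 4-byte blocks, 16k lines.
WORD_BITS = 2                          # 4 bytes per block -> 2 word bits
LINE_BITS = 14                         # 16k lines -> 14 line bits
TAG_BITS = 24 - LINE_BITS - WORD_BITS  # remaining most significant bits


def split_direct(address):
    """Split a 24-bit address into (tag, line, word)."""
    word = address & ((1 << WORD_BITS) - 1)
    line = (address >> WORD_BITS) & ((1 << LINE_BITS) - 1)
    tag = address >> (WORD_BITS + LINE_BITS)
    return tag, line, word


# One stored tag per cache line; None means the line is empty.
lines = [None] * (1 << LINE_BITS)


def access(address):
    """Return True on a hit; on a miss, load the block's tag into its line."""
    tag, line, _ = split_direct(address)
    hit = lines[line] == tag
    if not hit:
        lines[line] = tag  # the new block replaces whatever was in this line
    return hit


parts = split_direct(0x16339C)  # tag 0x16, line 0x0CE7, word 0
```

Two addresses such as `0x16339C` and `0x17339C` share the same line field but have different tags, so in this sketch each access to one evicts the other. That is the main drawback of direct mapping.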

Direct Mapping Summary:

- Address length = (s + w) bits

- Number of addressable units = $2^{s+w}$ words or bytes

- Block size = line size = $2^{w}$ words or bytes

- Number of blocks in main memory = $2^{s+w} / 2^{w} = 2^{s}$

- Number of lines in cache = m = $2^{r}$

- Size of tag = (s – r) bits
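As a worked check, the summary formulas can be applied to the 64 kByte cache, 4-byte block, 24-bit address example given earlier. The values of w, r and s below are derived from that example and are not stated explicitly in the original text.

```latex
\begin{aligned}
\text{Block size} &= 4 = 2^{2} \text{ bytes} &&\Rightarrow\; w = 2 \\
\text{Address length} &= s + w = 24 \text{ bits} &&\Rightarrow\; s = 22 \\
\text{Blocks in main memory} &= 2^{s+w} / 2^{w} = 2^{24} / 2^{2} = 2^{22} \\
\text{Lines in cache} &= m = 2^{14} &&\Rightarrow\; r = 14 \\
\text{Size of tag} &= s - r = 22 - 14 = 8 \text{ bits}
\end{aligned}
```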

II. Associative Mapping:

This mapping scheme improves cache utilization, but at the expense of speed, because a lookup has to compare the tag against every cache line. In associative mapping the cache line tags are 12 bits wide rather than 5, i.e. $2^{12}$ = 4096 memory lines, and any memory line can be stored in any cache line.
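A minimal sketch of an associative lookup follows. The whole memory line number serves as the tag, and any line may hold any memory line. The class name `AssociativeCache`, the two-line capacity, and the first-in-first-out (FIFO) eviction are assumptions made for this illustration; the text does not specify a replacement algorithm.

```python
from collections import OrderedDict


class AssociativeCache:
    """Fully associative cache that tracks tags only (illustrative sketch)."""

    def __init__(self, num_lines):
        self.num_lines = num_lines
        self.lines = OrderedDict()  # tag -> placeholder, oldest entry first

    def access(self, memory_line):
        """Return True on a hit; on a miss, load the line, evicting the oldest if full."""
        tag = memory_line  # the full memory line number is the tag
        if tag in self.lines:
            return True
        if len(self.lines) >= self.num_lines:
            self.lines.popitem(last=False)  # FIFO eviction (assumed policy)
        self.lines[tag] = None
        return False


cache = AssociativeCache(num_lines=2)
results = [cache.access(n) for n in (5, 4000, 5, 7, 5)]
```

Real hardware compares all tags in parallel. The dictionary lookup here only stands in for that comparison.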

III. Set-associative Mapping:

This scheme is a compromise between the direct and associative schemes. In set-associative mapping, the cache is divided into sets of tags, and the set number is directly mapped from the memory address, i.e. the tag field identifies one of the $2^{6}$ = 64 different memory lines in each of the $2^{6}$ = 64 different set values.

In set-associative mapping, if the number of lines per set is n, the mapping is known as n-way set-associative mapping.
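The sketch below illustrates an n-way set-associative lookup using the 6-bit set and 6-bit tag fields mentioned above. It assumes the set number comes from the low-order bits of the memory line number and that each set evicts its oldest entry (FIFO). Both choices, along with the names `split_set_assoc` and `SetAssociativeCache`, are assumptions made for illustration.

```python
SET_BITS = 6  # 2**6 = 64 sets, as in the text


def split_set_assoc(memory_line):
    """Split a 12-bit memory line number into (tag, set_index)."""
    set_index = memory_line & ((1 << SET_BITS) - 1)  # low bits (assumed layout)
    tag = memory_line >> SET_BITS                    # remaining high bits
    return tag, set_index


class SetAssociativeCache:
    """n-way set-associative cache that tracks tags only (illustrative sketch)."""

    def __init__(self, ways):
        self.ways = ways  # n lines per set
        self.sets = [[] for _ in range(1 << SET_BITS)]  # tags per set, oldest first

    def access(self, memory_line):
        """Return True on a hit; on a miss, load the tag into its set."""
        tag, set_index = split_set_assoc(memory_line)
        tags = self.sets[set_index]
        if tag in tags:
            return True
        if len(tags) >= self.ways:
            tags.pop(0)  # FIFO eviction within the set (assumed policy)
        tags.append(tag)
        return False


two_way = SetAssociativeCache(ways=2)
# Memory lines 5, 69 and 133 all map to set 5 with tags 0, 1 and 2.
results = [two_way.access(n) for n in (5, 69, 5, 133, 5)]
```

With two ways, lines 5 and 69 can coexist in set 5, whereas a direct-mapped cache would hold only one of them. A third line mapping to the same set still forces an eviction.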
