Radial Basis Function Network (RBF)

Last Updated: Sep 16, 2013

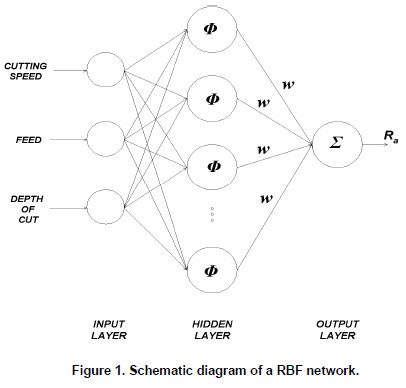

A Radial Basis Function (RBF) network is an artificial feedforward neural network with a single hidden layer that uses radial basis functions as activation functions. It is used in the field of mathematical modeling.

The output of the RBF network is a linear combination of radial basis functions of the inputs, weighted by the neuron parameters. This network is used in time series prediction, function approximation, system control and classification.
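The computation described above can be sketched as follows. This is a minimal illustration that assumes Gaussian basis functions sharing a single width; the names `rbf_forward`, `centers`, `width`, `weights` and `bias` are hypothetical and do not come from the article.

```python
import numpy as np

def rbf_forward(x, centers, width, weights, bias=0.0):
    """Output of a Gaussian RBF network for one input vector x (illustrative)."""
    # Squared Euclidean distance from x to every hidden-unit center.
    sq_dist = np.sum((centers - x) ** 2, axis=1)
    # Hidden layer: one Gaussian radial basis function per center.
    hidden = np.exp(-sq_dist / (2.0 * width ** 2))
    # Output layer: linear combination of the hidden activations.
    return float(weights @ hidden + bias)

# Two hidden units with assumed centers and output weights.
centers = np.array([[0.0, 0.0], [1.0, 1.0]])
weights = np.array([1.0, -1.0])
y = rbf_forward(np.array([0.0, 0.0]), centers, width=1.0, weights=weights)
```

Only the hidden layer is nonlinear here. Once the centers and the width are fixed, the output depends linearly on `weights`.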

Cover’s theorem on the separability of patterns:

This theorem justifies the use of a linear output layer in an RBF network. According to the theorem, when the transformation from the input space to the feature space is nonlinear and the dimensionality of the feature space is high compared to that of the input space, there is a high likelihood that a pattern classification task that is not linearly separable in the input space is transformed into a linearly separable one in the feature space.
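A small illustration of this idea is the XOR problem, which is not linearly separable in the input space. The sketch below assumes two Gaussian hidden units centered on the two class-0 patterns; the centers and the threshold 0.9 are choices made for this example only. In this particular case the nonlinearity alone is enough, so the feature space does not even need a higher dimension than the input space.

```python
import numpy as np

# The four XOR patterns and their class labels.
X = np.array([[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]])
labels = np.array([0, 1, 1, 0])

# Assumed hidden-unit centers, placed on the two class-0 patterns.
centers = np.array([[0.0, 0.0], [1.0, 1.0]])

def hidden_features(X, centers):
    # Pairwise squared distances, shape (n_patterns, n_centers).
    sq_dist = np.sum((X[:, None, :] - centers[None, :, :]) ** 2, axis=2)
    # Gaussian feature of each pattern with respect to each center.
    return np.exp(-sq_dist)

Phi = hidden_features(X, centers)

# In feature space one linear rule separates the classes:
# class-0 patterns have phi_1 + phi_2 above 0.9, class-1 patterns below.
predicted = (Phi.sum(axis=1) < 0.9).astype(int)
```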

Interpolation problem:

The interpolation problem requires every input vector to be mapped exactly onto the corresponding target vector. Solving it means determining the real coefficients and the polynomial term of the interpolating function. The function is called a radial basis function if the interpolation problem has a unique solution.
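As a sketch of exact interpolation, the example below places one Gaussian basis function on every training input and solves the resulting linear system for the coefficients. The polynomial term is left out for simplicity, and the data, the width and the name `gaussian_matrix` are assumptions made for illustration.

```python
import numpy as np

def gaussian_matrix(X, centers, width):
    # Entry (i, j) is the Gaussian of the distance between X[i] and centers[j].
    sq_dist = np.sum((X[:, None, :] - centers[None, :, :]) ** 2, axis=2)
    return np.exp(-sq_dist / (2.0 * width ** 2))

# Toy data: five distinct 1-D inputs with target values.
X = np.array([[0.0], [1.0], [2.0], [3.0], [4.0]])
t = np.array([0.0, 0.8, 0.9, 0.1, -0.8])

# Exact interpolation: one basis function centered on every training input.
Phi = gaussian_matrix(X, X, width=1.0)
w = np.linalg.solve(Phi, t)   # coefficients satisfying Phi @ w = t

fitted = Phi @ w              # reproduces the targets at the training inputs
```

For distinct inputs the Gaussian interpolation matrix is nonsingular, so the coefficients are determined uniquely.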

Supervised learning as an ill-posed hypersurface reconstruction:

The supervised part of the training procedure for the RBF network is concerned with determining suitable values for the weights of the connections between the hidden layer and the output layer. The learning of a neural network can be viewed as a hypersurface reconstruction problem, which is an ill-posed inverse problem for the following reasons-

i) Lack of the information in the training data that is needed to reconstruct the input-output mapping uniquely.

ii) Presence of noise in the input data adds uncertainty.

Regularization theory-

Regularization is a technique for controlling the smoothness properties of a mapping function. It involves adding to the error function an extra term designed to penalize mappings that are not smooth. Regularization theory thus offers an alternative to restricting the number of hidden units as a way of preventing overfitting in RBF networks.
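The effect of a penalty term can be sketched with a simple example. The code below uses a weight-decay penalty (the squared norm of the output weights) as a stand-in for a general smoothness penalty; that choice, the toy data, and the names `design_matrix`, `fit_weights` and `lam` are assumptions of this sketch.

```python
import numpy as np

def design_matrix(x, centers, width):
    # Gaussian hidden-unit outputs for 1-D inputs, shape (n_samples, n_hidden).
    return np.exp(-(x[:, None] - centers[None, :]) ** 2 / (2.0 * width ** 2))

def fit_weights(Phi, t, lam):
    # Minimize ||Phi w - t||^2 + lam * ||w||^2 (sum-of-squares error plus penalty).
    n_hidden = Phi.shape[1]
    return np.linalg.solve(Phi.T @ Phi + lam * np.eye(n_hidden), Phi.T @ t)

# Noisy samples of a sine curve (synthetic data for illustration).
rng = np.random.default_rng(0)
x = np.linspace(0.0, 1.0, 20)
t = np.sin(2.0 * np.pi * x) + 0.1 * rng.standard_normal(x.size)

# One hidden unit per data point, so the unpenalized fit can follow the noise.
Phi = design_matrix(x, centers=x, width=0.1)
w_small = fit_weights(Phi, t, lam=1e-6)   # almost no regularization
w_large = fit_weights(Phi, t, lam=1.0)    # strong regularization
```

A larger `lam` shrinks the output weights and gives a smoother mapping at the cost of a larger training error, without changing the number of hidden units.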

Regularization network-

An RBF network can be seen as a special case of a regularization network. RBF networks have a sound theoretical foundation in regularization theory and fit naturally into the framework of regularized interpolation/approximation tasks. For these problems, regularization means smoothing the interpolating/approximating curve or surface. An RBF network approached in this way is also known as a regularization network.

Generalized Radial Basis Function networks (RBF):

RBF networks have good generalization ability and a simple network structure that avoids unnecessary and lengthy calculation. The modified or generalized RBF network has the following characteristics-

i) Gaussian function is modified

ii) Hidden neuron activation is normalized

iii) Output weights are a function of the input variables

iv) A sequential learning algorithm is presented.
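Of the characteristics listed above, the normalization of the hidden neuron activations is the easiest to illustrate. The sketch below assumes the common form in which each Gaussian activation is divided by the sum of all activations; the article does not give the exact formula, so this form and the name `normalized_activations` are assumptions.

```python
import numpy as np

def normalized_activations(x, centers, width):
    # Plain Gaussian activation of every hidden unit for input x.
    sq_dist = np.sum((centers - x) ** 2, axis=1)
    phi = np.exp(-sq_dist / (2.0 * width ** 2))
    # Normalization: the activations are rescaled so that they sum to one.
    return phi / phi.sum()

centers = np.array([[0.0], [1.0], [2.0]])
h = normalized_activations(np.array([0.5]), centers, width=0.5)
```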

Regularization parameter estimation:

The unknown weights and the error variance are estimated by regularization. The regularization parameter has the effect of reducing the variances of the network parameter estimates. The weight parameters of the RBF network are estimated by maximum penalized likelihood, and the regularization parameter is given as β = Θα², where α² is the error variance.

RBF networks- Approximation properties:

RBFs are embedded in a two-layer neural network in which each hidden unit implements a radial activation function. The output units implement a weighted sum of the hidden unit outputs. The mapping from the input to the hidden layer is nonlinear, while the output is often linear. Owing to their nonlinear approximation capabilities, RBF networks are able to model complex mappings.

RBF networks and multilayer Perceptron comparison:

Similarities-

i) They are both non-linear feedforward networks

ii) They are both universal approximators

iii) They are both used in similar application areas

Dissimilarities-

An RBF network has a single hidden layer, whereas a multilayer perceptron can have any number of hidden layers.

Kernel regression and RBF networks relationship:

The theory of kernel regression provides another viewpoint on the use of RBF networks for function approximation. It provides a framework for estimating regression functions from noisy data using kernel density estimation techniques. The objective of function approximation is to find a mapping from the input space to the output space. The mapping is provided by forming the regression, that is, the conditional average of the target data, conditioned on the input variables. The resulting regression function is known as the Nadaraya-Watson estimator.
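The Nadaraya-Watson estimator can be sketched as a kernel-weighted average of the training targets. The example assumes a Gaussian kernel and 1-D inputs; the function name and the `width` parameter are illustrative.

```python
import numpy as np

def nadaraya_watson(x_query, x_train, t_train, width):
    # Gaussian kernel weight of every training input relative to the query.
    k = np.exp(-(x_query - x_train) ** 2 / (2.0 * width ** 2))
    # Conditional average of the targets, weighted by the kernel values.
    return float(np.sum(k * t_train) / np.sum(k))

x_train = np.array([0.0, 1.0, 2.0])
t_train = np.array([1.0, 3.0, 5.0])
y_mid = nadaraya_watson(1.0, x_train, t_train, width=0.5)
```

The kernel weights divided by their sum have the same form as normalized radial basis functions centered on the training inputs, with the targets acting as output weights.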

Learning strategies:

Common learning strategies are the orthogonal least squares (OLS) method and the hybrid learning method. In OLS, the hidden neurons (the RBF centers) are selected one by one in a supervised manner. The computationally more efficient hybrid learning method combines self-organized and supervised learning strategies.
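A hybrid scheme of this kind can be sketched in two stages. The example below assumes k-means clustering for the self-organized stage and linear least squares for the supervised stage; the article does not name specific algorithms, so both choices, the toy data, and all function names are assumptions of this sketch.

```python
import numpy as np

def kmeans(X, k, n_iter=50, seed=0):
    # Self-organized stage: place k centers using the inputs only (no targets).
    rng = np.random.default_rng(seed)
    centers = X[rng.choice(len(X), size=k, replace=False)].copy()
    for _ in range(n_iter):
        sq_dist = np.sum((X[:, None, :] - centers[None, :, :]) ** 2, axis=2)
        nearest = np.argmin(sq_dist, axis=1)
        for j in range(k):
            members = X[nearest == j]
            if len(members) > 0:   # keep the old center if a cluster is empty
                centers[j] = members.mean(axis=0)
    return centers

def design_matrix(X, centers, width):
    # Gaussian hidden-unit outputs, shape (n_samples, n_centers).
    sq_dist = np.sum((X[:, None, :] - centers[None, :, :]) ** 2, axis=2)
    return np.exp(-sq_dist / (2.0 * width ** 2))

# Toy regression data: samples of one period of a sine curve.
X = np.linspace(0.0, 1.0, 50).reshape(-1, 1)
t = np.sin(2.0 * np.pi * X[:, 0])

centers = kmeans(X, k=8)                      # stage 1: self-organized
Phi = design_matrix(X, centers, width=0.15)
w, *_ = np.linalg.lstsq(Phi, t, rcond=None)   # stage 2: supervised
rmse = float(np.sqrt(np.mean((Phi @ w - t) ** 2)))
```

Because the centers are fixed before the weights are fitted, the supervised stage reduces to a linear least squares problem, which is one reason such a scheme is computationally cheap.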

Related Questions and Answers

Q1. What are the similarities between RBF and MLP networks?

Ans- Both are non-linear feedforward networks, both are universal approximators, and both are used in similar application areas.

Q2. What is the difference between RBF and MLP networks?

Ans- An RBF network has a single hidden layer, whereas an MLP can have any number of hidden layers.

Q3. Classify the learning strategies for RBF networks.

Ans- They are classified as-

i) RBF networks with a fixed number of RBF centers

ii) RBF networks employing a supervised procedure for selecting a fixed number of RBF centers

Q4. What is Cover’s theorem?

Ans- According to this theorem, a complex pattern classification problem cast nonlinearly into a high dimensional space is more likely to be linearly separable than in a low dimensional space. It tells us that we can map the input space to a high dimensional space in which a linear separating function is likely to be found.
